Financial institutions need more than execution. They need a complete, durable operating record of AI decisions, controls, and outcomes.

Institutional traceability is where AI governance goes beyond a collection of separate controls and starts functioning as a coherent system of record.

A financial institution can have verified identities, traceable lineage, stable semantics, controlled model access, continuous evaluation, and automated policy decisions and still fail at the point that ultimately matters: being able to reconstruct what the system did, under which controls, for what reasons, with what evidence, and to what outcome.

If the records that answer those questions live in separate systems with no reliable way to connect them — identity in an access log, lineage in a catalog, evaluation in a monitoring dashboard, policy decisions in an event stream — the institution may have governance controls in place. It does not yet have a governed operating record.

That is why institutional traceability matters.

It is often described as audit logging or observability. Those framings are useful. They are not enough for governance.

In regulated financial environments, institutional traceability is the layer that makes AI governance legible: the ability to reconstruct any decision, show which controls were operating, surface what evidence existed, account for exceptions and overrides, and demonstrate that the system operated as governed.

By this point in the series, the institution can already execute governance decisions.

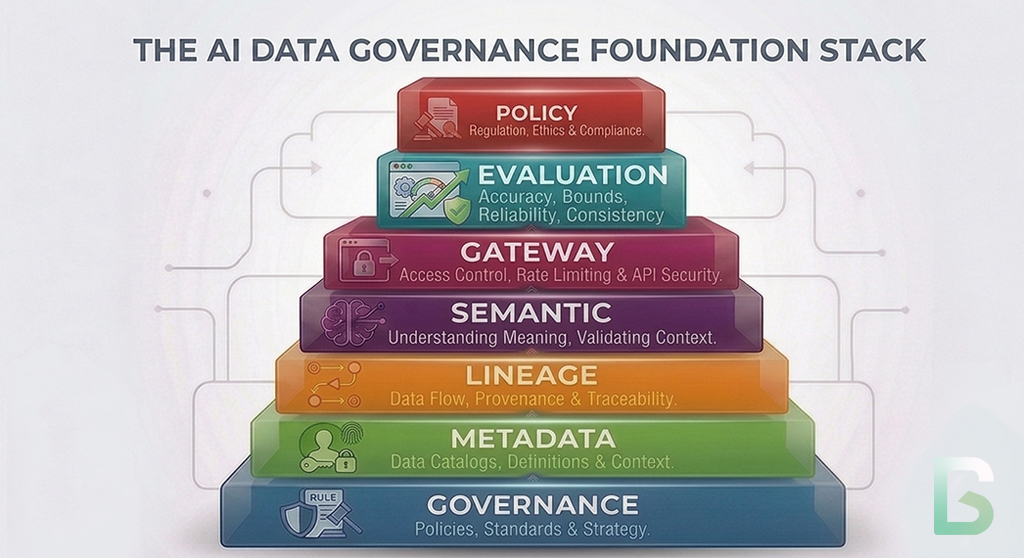

Identity establishes what exists and who is acting. Lineage establishes what can be trusted. Semantics establishes what things mean. Gateways establish what model access is allowed. Evaluation establishes whether behavior remains acceptable. Policy engines establish how governance decisions are executed consistently at scale.

That makes the environment observable, controllable, and accountable.

This is the next layer in the governance stack. Governance establishes authority. Metadata makes systems machine-operable. Identity resolves actors and artifacts. Lineage connects execution to evidence. Semantics stabilizes meaning. Gateways control model access. Evaluation measures whether behavior remains acceptable. Policy engines execute governance decisions. Institutional traceability connects all of those layers into a durable operating record that supports oversight, audit, incident response, and regulator engagement.

That is the shift from governed behavior to verifiable governance.

Without institutional traceability, the earlier layers may exist, but their outputs cannot be reliably connected.

With institutional traceability, every governance decision exists as part of an operating record that can be queried, audited, and defended.

Previously in the Series

This article builds directly on the earlier layers of the AI governance stack:

- Why Governance is the Precondition for Scalable AI Agents

- Metadata for AI Agents vs. Human Metadata

- Identity for AI Systems: The Glue That Holds AI Governance Together

- Data Lineage as the Backbone of AI Governance

- Semantic Layers: The Hidden Infrastructure Behind Scalable AI

- AI Gateways: The Control Plane for Model Access

- Agent Evaluation Systems: The Governance Layer for Autonomous AI

- AI Policy Engines: Automating Governance Decisions

Why Policy Automation Is Not Enough

Executing the right decision is not the same as having a reliable record that it was executed correctly.

Policy engines determine what the system is allowed to do next.

They do not, by themselves, produce a record that answers a different but equally important set of questions.

Can the institution reconstruct the full sequence of decisions leading to a specific outcome? Can it show which policy version was in effect at the time? Can it demonstrate that the evaluation signals informing the decision were current and complete? Can it account for overrides: who authorized them, for what duration, and whether they expired correctly?

Those are traceability questions.

They sit alongside policy execution, not downstream of it.

A policy engine can block, allow, route, restrict, or escalate an action. That is governance execution.

But if the record of that execution lives only in an event log disconnected from the identity trail, the lineage chain, the evaluation state, and the policy version, the institution cannot answer the question that regulators and second-line teams actually ask.

Not just: what happened?

But: what controls were in place when it happened, were they operating correctly, and what evidence supports that conclusion?

Why Fragmented Records Break Governance

Disconnected logs are not a governance record.

Most institutions generate substantial data about AI system operations. The governance problem is not typically an absence of records. It is fragmentation.

No Single Reconstruction Capability

Identity records, lineage graphs, evaluation outputs, gateway event logs, and policy decisions each exist in their own system. When an incident occurs or an examiner asks a question, the institution has to manually correlate records that were never designed to be queried together.

That correlation takes time, introduces uncertainty, and may still leave gaps.

Policy And Evidence On Different Timelines

A policy decision made at 14:03 may be logged separately from the evaluation state that existed at 14:00, the lineage snapshot as of the prior morning, and the identity record from the session initiated at 13:55.

If those timestamps and contexts are not designed to link, the institution may have all the raw data and still be unable to reconstruct whether the decision was made correctly given the conditions that existed at the time.

Override Records That Cannot Be Verified

Exceptions and overrides are a routine part of any governance system. In a fragmented architecture, the record of an override may exist in a ticket, an email, a governance platform, and an application log as separate artifacts that cannot be reliably joined.

If the institution cannot show that an override was authorized, time-bounded, and properly expired, it cannot demonstrate that its exception process was controlled.

Evidence That Degrades Over Time

Records that are not designed for governance retention may be trimmed, rotated, archived, or purged before an audit window closes.

At that point, the institution does not have a gap in its logs.

It has a gap in its governance.
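A retention rule can be made explicit rather than left to default log rotation. This is a minimal sketch under stated assumptions: the seven-year period is a placeholder, not a regulatory figure, and `purge_allowed` and the notion of an "open window" (a period still under audit, examination, or legal hold) are hypothetical.

```python
from datetime import datetime, timedelta

# Assumption for illustration: a governance record may be purged only when its
# retention period has elapsed AND no open audit window or legal hold covers it.
def purge_allowed(created_at: datetime, now: datetime, retention: timedelta,
                  open_windows: list[tuple[datetime, datetime]]) -> bool:
    if now < created_at + retention:
        return False  # still inside its retention period
    for start, end in open_windows:
        if start <= created_at <= end:
            return False  # record falls inside a period still under review
    return True

created = datetime(2019, 3, 1)
seven_years = timedelta(days=7 * 365)  # placeholder retention period
exam_window = [(datetime(2019, 1, 1), datetime(2019, 12, 31))]
print(purge_allowed(created, datetime(2026, 6, 1), seven_years, exam_window))  # False
print(purge_allowed(created, datetime(2026, 6, 1), seven_years, []))           # True
```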

What Institutional Traceability Actually Does

Institutional traceability turns a stack of governance controls into a coherent system of record.

The common framing is still too narrow.

Institutional traceability is not event logging, audit trail tooling, or observability infrastructure, though it draws on all three. In a governed architecture, it is the capability that connects governance evidence across every layer into a record that can support reconstruction, review, and defense.

Its role is to ensure that the institution can answer, for any governed AI decision: who acted, on what, with what authority, under which controls, with what evidence, with what outcome, and whether any exceptions were in effect.

That makes institutional traceability governance-critical infrastructure.
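The questions listed above can double as a record schema. The sketch below assumes a hypothetical `GovernedDecisionRecord` with one field per question; a field left as `None` marks a question the record cannot answer, while an empty `exceptions` list records that no exception was in effect.

```python
from dataclasses import dataclass, fields
from typing import Optional

# Hypothetical record shape: one field per traceability question.
@dataclass
class GovernedDecisionRecord:
    actor: Optional[str] = None        # who acted (service or user identity)
    subject: Optional[str] = None      # on what (dataset, account, workflow)
    authority: Optional[str] = None    # delegation or authorization reference
    controls: Optional[str] = None     # policy version / gateway config in force
    evidence: Optional[str] = None     # lineage or evaluation references
    outcome: Optional[str] = None      # allowed, blocked, escalated, ...
    exceptions: Optional[list[str]] = None  # active override IDs; [] means "none"

    def unanswered(self) -> list[str]:
        """Names of the questions this record cannot answer."""
        return [f.name for f in fields(self) if getattr(self, f.name) is None]

rec = GovernedDecisionRecord(actor="svc-servicing-agent", subject="acct-123",
                             controls="policy-v12", outcome="escalated",
                             exceptions=[])
print(rec.unanswered())  # ['authority', 'evidence']
```

Distinguishing "unknown" from "none" matters most for exceptions: an empty list tells a reviewer something, while a missing value is itself a gap.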

SR 11-7 (Guidance on Model Risk Management) calls for model validation, outcomes monitoring, and governance decisions to be documented in ways that support ongoing review and examination. As AI systems move from static models into agentic architectures, that documentation expectation extends to the full operating context: not just whether a model was validated, but whether the institution can reconstruct how it behaved and what controls governed that behavior at the time of any given decision.

The NIST AI Risk Management Framework frames this similarly. Its Govern and Manage functions both emphasize accountability: the ability to demonstrate not just that risk was identified, but that it was managed in practice and that evidence of that management exists and can be produced.

The Capabilities That Matter

Good traceability systems produce records that are cross-layer, durable, and structured for reconstruction.

Decision Reconstruction

The central capability is not storage. It is reconstruction.

The institution should be able to take any governed AI decision — an action taken, a recommendation produced, a workflow escalated — and trace backward to the identity context, the lineage of the data used, the semantic definitions in effect, the gateway controls that applied, the evaluation state at the time, and the policy version that determined the outcome.

That is a different requirement from general observability.
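Tracing backward through lineage is a graph walk from the decision to everything upstream of it. A minimal sketch, assuming lineage is available as a hypothetical adjacency map from each node to the nodes it was derived from; real catalogs expose this through their own APIs, and the node names here are invented.

```python
from collections import deque

# Hypothetical lineage graph: each node maps to the upstream nodes it was derived from.
upstream = {
    "decision:7781": ["model:servicing-assist@3.2", "features:acct-123@2024-05-02"],
    "features:acct-123@2024-05-02": ["table:payments", "table:customer_profile"],
    "model:servicing-assist@3.2": ["dataset:train-2024q1"],
    "table:payments": [], "table:customer_profile": [], "dataset:train-2024q1": [],
}

def trace_back(node: str, graph: dict[str, list[str]]) -> list[str]:
    """Breadth-first walk from a decision to everything it depended on."""
    seen, order, queue = {node}, [], deque([node])
    while queue:
        current = queue.popleft()
        for parent in graph.get(current, []):
            if parent not in seen:  # shared upstream nodes are visited once
                seen.add(parent)
                order.append(parent)
                queue.append(parent)
    return order

print(trace_back("decision:7781", upstream))
```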

Cross-Layer Correlation

Records from identity, lineage, evaluation, gateway, and policy systems should share enough common context — a shared trace identifier, a shared session or workflow reference, correlated timestamps — that they can be queried as a coherent record rather than assembled by hand.
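A sketch of what that shared context enables, assuming every layer stamps its events with a common `trace_id` (a hypothetical field name) and ISO-8601 UTC timestamps in a single format. Grouping by the identifier yields one record per decision, and the same pass reports which layers never reported for that decision.

```python
from collections import defaultdict

REQUIRED_LAYERS = {"identity", "lineage", "gateway", "evaluation", "policy"}

# Hypothetical events as they might arrive from separate systems, each tagged
# with a shared trace_id at the point of emission.
events = [
    {"trace_id": "t-01", "layer": "identity", "ts": "2024-05-02T13:55:00Z"},
    {"trace_id": "t-01", "layer": "gateway", "ts": "2024-05-02T14:02:59Z"},
    {"trace_id": "t-01", "layer": "policy", "ts": "2024-05-02T14:03:00Z"},
    {"trace_id": "t-02", "layer": "policy", "ts": "2024-05-02T14:10:00Z"},
]

def correlate(events):
    """Group events by trace_id and report which layers are missing per trace."""
    traces = defaultdict(list)
    for e in events:
        traces[e["trace_id"]].append(e)
    report = {}
    for trace_id, items in traces.items():
        items.sort(key=lambda e: e["ts"])  # same-format UTC strings sort chronologically
        present = {e["layer"] for e in items}
        report[trace_id] = sorted(REQUIRED_LAYERS - present)
    return dict(traces), report

_, gaps = correlate(events)
print(gaps)
```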

Control State Capture

The operating record should include not just what happened, but what governance environment was in place when it happened.

That means capturing policy versions, active thresholds, evaluation scores, approval states, and gateway configurations as they stood at the moment of the decision, not just recording the event log entry.
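One way to capture control state is an immutable snapshot written at decision time and fingerprinted so it can be checked later. The class and its fields below are hypothetical; the fingerprint is a SHA-256 over canonical JSON, which detects changes to the stored snapshot but does not by itself prove when it was written.

```python
import hashlib
import json
from dataclasses import dataclass, asdict

# Hypothetical snapshot of the governance environment at decision time.
@dataclass(frozen=True)
class ControlStateSnapshot:
    decision_id: str
    policy_version: str
    thresholds: tuple[tuple[str, float], ...]  # (name, value) pairs, immutable
    evaluation_score: float
    approval_state: str
    gateway_config: str

    def fingerprint(self) -> str:
        """SHA-256 over a canonical JSON encoding, so a later reviewer can
        confirm the stored snapshot has not changed since capture."""
        canonical = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

snap = ControlStateSnapshot("d-7781", "policy-v12", (("max_refund_usd", 500.0),),
                            0.87, "approved", "gw-config-41")
stored_fingerprint = snap.fingerprint()  # stored alongside the decision record
```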

Override And Exception Documentation

Formal override records should be first-class objects in the traceability architecture.

An override record should contain the authorization decision, the authorizing party, the scope of the exception, the applicable time bounds, the rationale, and the expiry or review trigger.

That structure makes exception governance auditable rather than assertable.

Retention And Governance Of Records

The traceability architecture must treat its own records as governed artifacts.

Retention periods, access controls, completeness requirements, and integrity protections for governance records should be governed with the same rigor applied to the AI systems they document.

Queryable Evidence For Oversight And Examination

Second-line teams, internal audit, and examiners should be able to query the governance record without requiring custom data engineering on each request.

The operating record is only useful as governance infrastructure if it can be interrogated at scale.
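To illustrate querying without per-request engineering, the sketch below uses an in-memory SQLite database with a hypothetical two-table schema and invented rows. An examination-style question (every servicing decision in a quarter, with its control context and any override in effect) becomes a single standing query rather than a reconstruction project.

```python
import sqlite3

# Hypothetical schema: one row per governed decision plus a table of overrides.
db = sqlite3.connect(":memory:")
db.executescript("""
CREATE TABLE decisions (
    decision_id TEXT PRIMARY KEY, service TEXT, decided_at TEXT,
    policy_version TEXT, eval_score REAL, outcome TEXT, override_id TEXT
);
CREATE TABLE overrides (
    override_id TEXT PRIMARY KEY, authorized_by TEXT, expires_at TEXT
);
INSERT INTO decisions VALUES
 ('d-1','servicing','2024-04-03T10:00:00Z','policy-v11',0.92,'allow',NULL),
 ('d-2','servicing','2024-05-02T14:03:00Z','policy-v11',0.87,'allow','ovr-19'),
 ('d-3','servicing','2024-07-01T09:00:00Z','policy-v12',0.90,'allow',NULL);
INSERT INTO overrides VALUES ('ovr-19','head-of-servicing-risk','2024-05-08T00:00:00Z');
""")

# Every servicing decision in Q2 2024, with control context and any override.
# ISO-8601 strings compare correctly as text, so the date bounds work directly.
rows = db.execute("""
SELECT d.decision_id, d.policy_version, d.eval_score, d.outcome,
       o.override_id, o.authorized_by, o.expires_at
FROM decisions d
LEFT JOIN overrides o ON o.override_id = d.override_id
WHERE d.service = 'servicing'
  AND d.decided_at >= '2024-04-01' AND d.decided_at < '2024-07-01'
ORDER BY d.decided_at
""").fetchall()

for row in rows:
    print(row)
```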

How Traceability Closes the Loop

This is where the governance stack becomes defensible as a whole.

Institutional traceability is not independent of the earlier layers.

Identity tells the traceability layer who or what acted, under what delegation, and with what authorization state.

Lineage tells the traceability layer what evidence, data, and dependencies were in scope at the time of the decision.

Semantics tells the traceability layer that the terms, task types, and thresholds used in governance records are defined consistently across the estate.

Gateways tell the traceability layer what model access was permitted and under which controls.

Evaluation tells the traceability layer what the performance state of the system was when the decision was made.

Policy engines tell the traceability layer what governance decision was reached and which rule, threshold, context, and exception path produced it.

Institutional traceability connects all of those signals into a record that can be queried, reviewed, and defended.

That is the conceptual shift that matters.

Traceability turns evidence into records.

It turns records into a system of account.

It turns a system of account into verifiable governance.

Where Governance Actually Becomes Verifiable

Governance is not verifiable until an examiner, an auditor, or a second-line team can reconstruct a decision independently.

Once institutional traceability is operating as a layer, it supports real accountability. The institution can:

- reconstruct any governed AI decision with its full control context, without custom data engineering for each request

- demonstrate that policy versions, evaluation thresholds, and approval states were correctly captured at the time of the decision

- show that overrides were authorized, time-bounded, and properly expired

- produce evidence for model risk examinations that connects model behavior to control state, not just to logged events

- support incident response by enabling rapid reconstruction of what the system did, under which conditions, and whether controls functioned correctly

- demonstrate to second-line oversight teams that governance controls were operating consistently across the estate

This is the practical difference between governance that is implemented and governance that is defensible.

Without institutional traceability, institutions may have controls but cannot demonstrate them coherently under examination.

With institutional traceability, they can show what was governed, how it was governed, and what the evidence is.

What Good Looks Like

The test is whether the institution can answer a governance question about any AI decision without assembling the answer by hand.

A Model Risk Examination Example

Consider an examination in which a regulator asks an institution to demonstrate that its servicing AI operated within approved limits during a specific quarter.

In a weak architecture, the institution can produce logs. It can show that a model gateway was in place. It can show that evaluation was running. But the records live in separate systems. Evaluation scores are in a monitoring platform. Policy decisions are in an engine event log. Identity context is in an access system. Lineage is in a data catalog. The institution’s answer to any connected question requires a data engineering effort to reconstruct a coherent picture.

The examiner is not asking for logs.

The examiner is asking whether the institution can demonstrate that governance controls were operating correctly for the specific decisions under review.

In a stronger architecture, the institution’s traceability layer connects those records. The query returns: the identity of the service and user context at the time of each decision, the lineage of the data used, the evaluation scores in effect, the policy version that governed the decision, the gateway controls that applied, and the full record of any overrides or exceptions, including their authorization, scope, and expiry.

At that point, the institution is not asserting that it was governed.

It is demonstrating it.

The Real Standard

You cannot defend governance you cannot reconstruct.

Institutional traceability matters because it transforms the governance stack from a set of controls into a verifiable operating system.

It is not optional record-keeping infrastructure. It is the mechanism by which institutions demonstrate that governance decisions were made correctly, that controls were operating as designed, and that exceptions were properly authorized and bounded.

That is the standard that matters in financial services.

It supports model risk management, regulatory examination, operational resilience, incident investigation, second-line oversight, and governance assurance.

An organization that executes governance decisions still does not have verified governance if it cannot reconstruct those decisions under examination.

It has an execution layer with an unverifiable record.

Institutional traceability is where AI governance becomes defensible.

What Comes Next

Every layer in the stack was built to enable something the previous layer could not provide. Together, they form an architecture.

Once institutions have traceable governance across identity, lineage, semantics, gateways, evaluation, policy, and the operating record, a single question remains.

How do these layers work as a unified architecture, and what does it take to build one?

Identity establishes who is acting.

Lineage establishes what can be trusted.

Semantics establishes what things mean.

Gateways establish what is allowed to be accessed.

Evaluation establishes whether behavior remains acceptable.

Policy engines establish how governance decisions are executed.

Institutional traceability connects all of those decisions into a durable operating record.

The final article will bring the full stack together: how the layers compose, where institutions typically begin, and what a governed AI architecture actually looks like when it is operating at scale.

Series: The Architecture of Governed AI Systems

- Governance is the Precondition for Scalable AI Agents

- Metadata for AI Agents vs Human Metadata

- Identity for AI Systems: The Missing Layer of AI Governance

- Data Lineage as the Trust Backbone of AI Systems

- Semantic Layers: The Hidden Infrastructure Behind Scalable AI

- AI Gateways: The Control Plane for Model Access

- How to Evaluate AI Agents: Building a Governance Framework

- AI Policy Engines: How to Operationalize AI Governance for Financial Institutions

- Institutional Traceability: The Operating System of AI Governance (this article)

Follow the Series

We are continuing to explore the architecture required for governed AI systems. The final article will bring the full stack together into a unified architecture.

Subscribe to the Data Sense newsletter to receive updates when the next article is published.